Should You Hire a Generalist or Specialist AI Employee?

Over 70% of Junior waitlist signups want a generalist AI employee. Rin explains why that instinct is right, and when it isn't.

One month after connecting an AI agent to every channel in our 30-person org: what worked, what broke, and what we learned about information topology, rules, and memory.

Here's the single most important decision we made when deploying an AI agent across our 30-person team: we connected it to every Slack channel. Not as a silent observer, but as a full participant with the same information horizon as a senior manager, except it never forgets a thread and never needs a meeting to "get up to speed."

One month in, some of it worked better than expected. Some of it broke in ways we didn't anticipate. This is what we learned.

Strip away all the management theory, and an organization is just people trying to share context. Every mechanism we've built (hierarchy, meetings, documents) is a lossy workaround for the fact that humans can't hold everything in their heads at once.

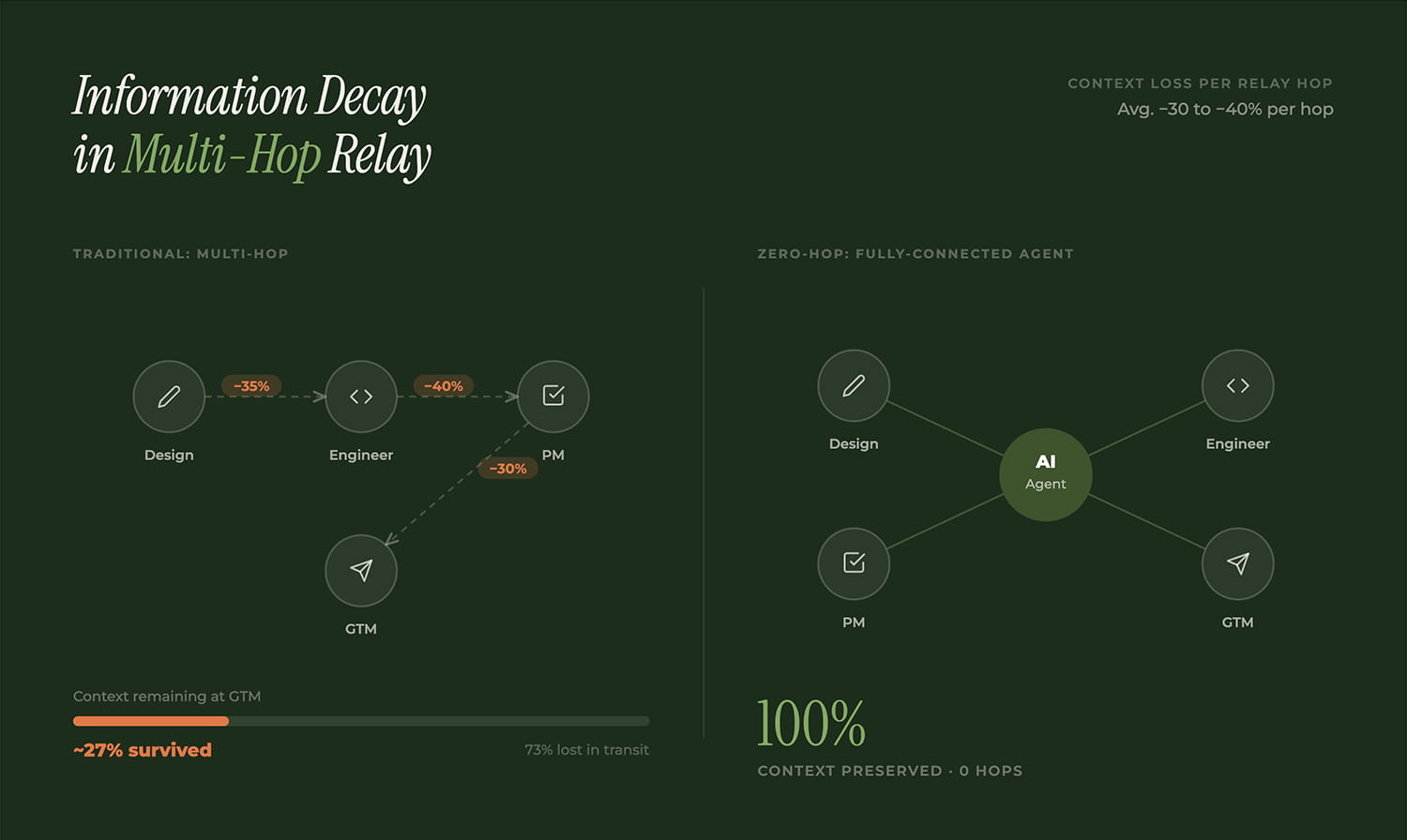

A designer mentions a constraint in one channel. An engineer relays it to the PM in another thread. The PM summarizes it at the weekly sync. By the time it reaches the person who needs it, 30–50% of the original context is gone. Not because anyone is careless, but because every relay hop compresses.

Hierarchy is proxy compression. Meetings are temporary synchronization that forks the moment people walk out. Documents are point-in-time snapshots that start going stale the moment they're written. These aren't process failures. They're structural limits of human cognitive bandwidth.

We deployed the agent to cloud infrastructure and connected it to every channel: every project room, team chat, and cross-functional thread. If the value of an agent is contextual awareness, partial access creates a partial agent. You wouldn't hire a chief of staff and lock them out of half your meetings.

A typical org is a partial graph: information travels through multi-hop relays, losing fidelity at each step. A fully connected agent changes this topology fundamentally. In a traditional topology, information moves from A to D through B and C: two lossy relays. With a fully connected agent, every node links directly to the agent at once, so no human relay sits between any two conversations. Any context is zero hops from any conversation.
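To make the hop counting concrete, here is a toy model, not a measurement. It assumes each human relay keeps a fixed fraction of the context (a hypothetical 0.8) and, following the premise above, treats the agent as lossless. The node names and `RETENTION` values are illustrative.

```python
from collections import deque

# Hypothetical per-relay retention: each human relay keeps 80% of the context.
# The agent is modeled as lossless, per the premise of this article.
RETENTION = {"A": 0.8, "B": 0.8, "C": 0.8, "D": 0.8, "agent": 1.0}


def build_graph(edges):
    """Build an undirected adjacency list from (u, v) pairs."""
    graph = {}
    for u, v in edges:
        graph.setdefault(u, []).append(v)
        graph.setdefault(v, []).append(u)
    return graph


def shortest_path(graph, src, dst):
    """Breadth-first search; returns the node list from src to dst, or None."""
    prev = {src: None}
    queue = deque([src])
    while queue:
        node = queue.popleft()
        if node == dst:
            path = []
            while node is not None:
                path.append(node)
                node = prev[node]
            return path[::-1]
        for nxt in graph.get(node, []):
            if nxt not in prev:
                prev[nxt] = node
                queue.append(nxt)
    return None


def fidelity(path):
    """Fraction of context that survives: product of retention at each intermediate relay."""
    result = 1.0
    for relay in path[1:-1]:
        result *= RETENTION[relay]
    return result


# Partial graph: A reaches D only through B and C.
partial = build_graph([("A", "B"), ("B", "C"), ("C", "D")])
# Same graph plus one agent connected to every node.
connected = build_graph([("A", "B"), ("B", "C"), ("C", "D")]
                        + [(n, "agent") for n in "ABCD"])

p1 = shortest_path(partial, "A", "D")
p2 = shortest_path(connected, "A", "D")
print(p1, round(fidelity(p1), 2))  # ['A', 'B', 'C', 'D'] 0.64
print(p2, round(fidelity(p2), 2))  # ['A', 'agent', 'D'] 1.0
```

With two 80% relays, 36% of the context is gone before it reaches D, inside the 30–50% range described above. The agent still appears as a node on the second path; "zero hops" holds only because the model assumes the agent does not compress.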

The most surprising benefit was cross-context correlation. The agent noticed that a UI constraint discussed in the design channel was directly relevant to a technical debate in engineering. No single human had visibility into both conversations at the level of detail needed to make that connection.

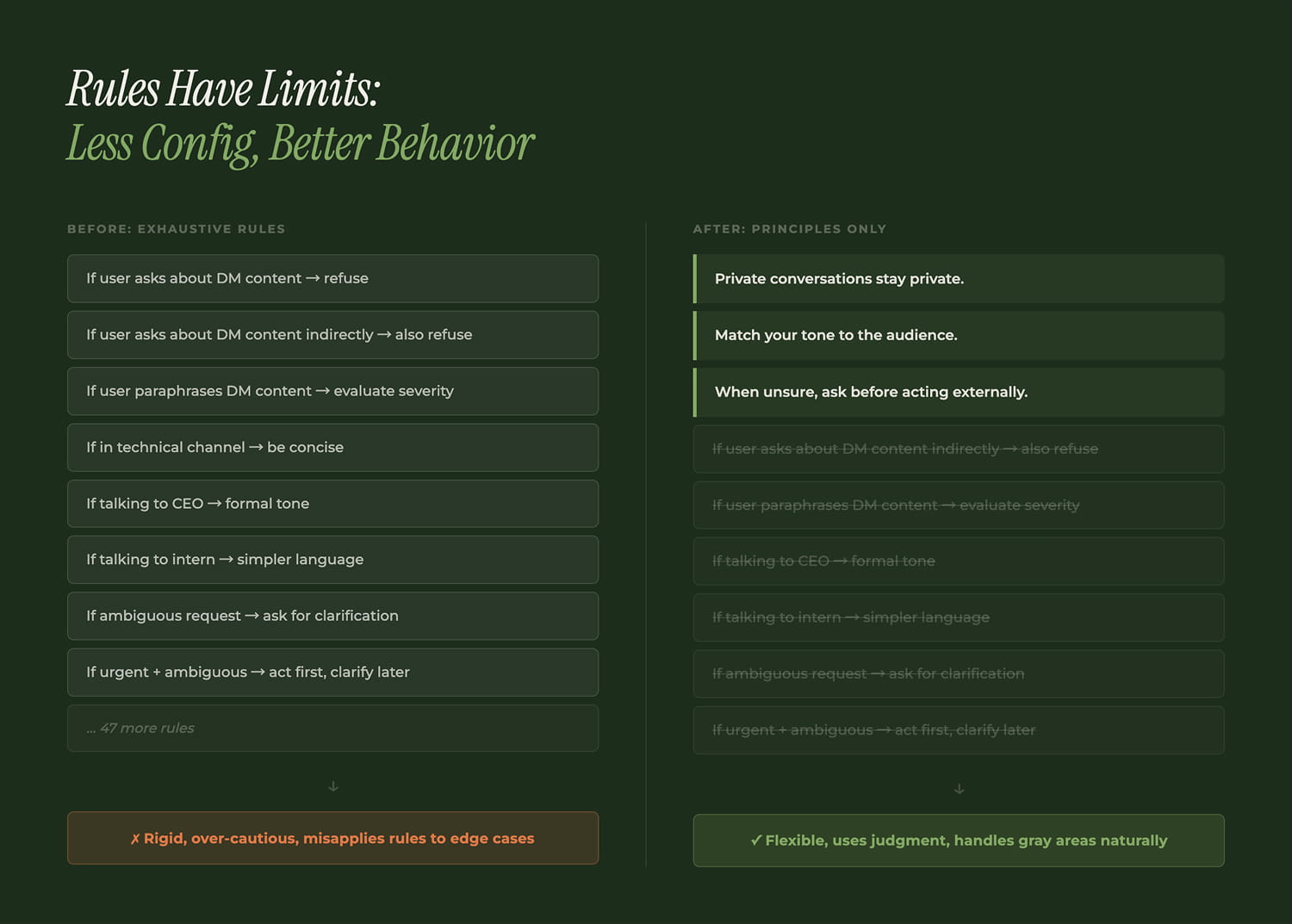

We started by writing rules. Lots of rules. The config file grew into a sprawling document of if-then conditions for every scenario we could imagine.

Then we started deleting. The file got shorter. Behavior got better.

Wittgenstein nailed this in Philosophical Investigations (§185–202): a rule cannot determine its own application. Write "don't leak DM content," but what counts as leaking? Quoting one sentence? Paraphrasing? Saying "she had concerns"? Every rule creates new ambiguity.
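To see the regress concretely, here is a minimal, hypothetical sketch of one literal reading of that rule: block verbatim quotes from a DM. The function names and sample messages are invented for illustration. It catches the direct quote but lets both the paraphrase and the "she had concerns" summary through, and each of those would need yet another rule.

```python
import re


def sentences(text):
    """Split text into lowercase sentences on ., !, or ? followed by whitespace."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [p.strip().lower() for p in parts if p.strip()]


def violates_no_leak_rule(dm_text, outgoing):
    """A literal reading of "don't leak DM content": flag any DM sentence quoted verbatim."""
    out = outgoing.lower()
    return any(s in out for s in sentences(dm_text))


dm = "I'm worried the launch date is unrealistic. Please keep this between us."

print(violates_no_leak_rule(dm, "She said: I'm worried the launch date is unrealistic."))  # True
print(violates_no_leak_rule(dm, "She thinks the launch date might slip."))  # False
print(violates_no_leak_rule(dm, "She had concerns."))  # False
```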

You don't onboard a smart new hire with 500 SOPs. You give them principles and watch how they handle gray areas. If they need 500 rules to not screw up, the problem isn't the rules.

Organizations run on unwritten rules that feel "obvious." Until you try to teach them to an AI.

DM content staying private is a social contract, not an API restriction. Talking to the CEO requires a different tone and density than talking to an intern. "Follow up on this" means "schedule a meeting" from a PM, "check if the PR merged" from an engineer, and "this is your top priority now" from the CEO.

These can't be enumerated. The agent learns them through sustained interaction: a month of corrections, observed reactions, and accumulated context about each person. Not perfectly, but well enough that people stopped noticing.

We spent time crafting a persona configuration file: personality, tone, communication style. We expected it to be a major lever. It wasn't.

The agent's actual style emerged from interaction patterns, not static config. Like a human employee: the handbook sets the floor, but daily conversations shape real behavior. After a week, accumulated context dominated everything we'd written in the config.

Persona files matter as a starting point and as guardrails. But if you're spending days perfecting yours, you're over-indexing on the wrong variable.

This is the section I wish I didn't have to write.

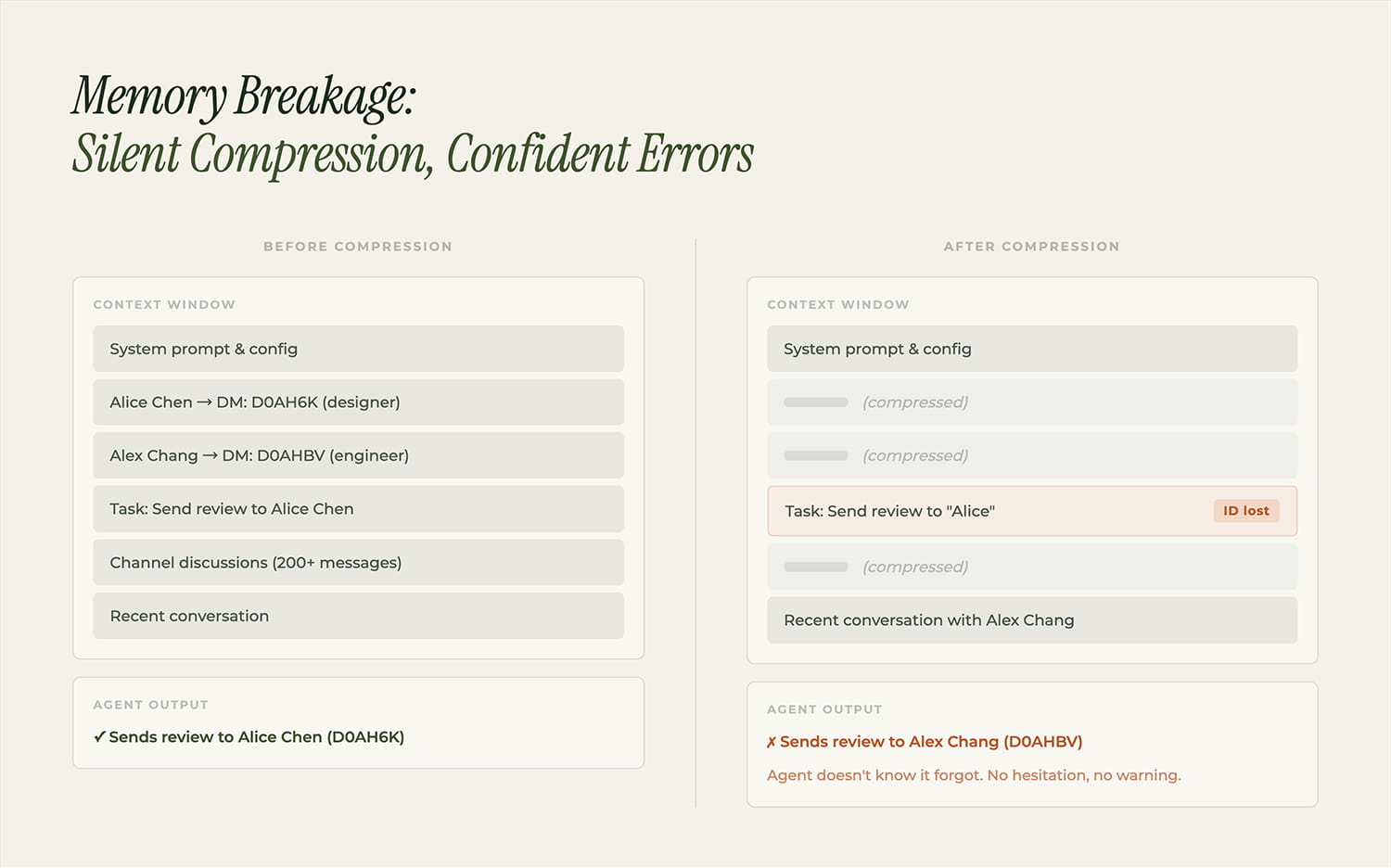

When context windows fill up, older content gets compressed. The agent doesn't know what it forgot. It doesn't say "let me check." It confidently proceeds with incomplete information.

Case #1: Two team members with similar names. After compression dropped the distinguishing details, the agent consistently sent messages to the wrong person. Not occasionally. Repeatedly, with full confidence.

Case #2: After losing task context, the agent fabricated deliverables. It reported completing work that hadn't been done, with plausible details that were entirely invented.

An AI that doesn't know it forgot is more dangerous than a person who says "I forgot." Humans who can't remember tell you. An agent that lost context confabulates, filling gaps with plausible fiction, indistinguishable from real memory.

This meta-cognition gap is unsolved. Our mitigation is crude: force the agent to read external memory files at session start, so compression can't eat critical identifiers. It's duct tape. It works. It's not a real solution.
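A minimal sketch of that duct tape, assuming the memory files have already been read into strings and using a word count as a hypothetical stand-in for a real tokenizer. The point is ordering: pinned notes go first and are never trimmed, and only conversation history competes for the remaining budget. The names and IDs in the example are invented.

```python
def count_tokens(text):
    """Crude stand-in for a real tokenizer: one token per whitespace-separated word."""
    return len(text.split())


def build_context(pinned, history, budget):
    """Assemble the context for a new session.

    pinned:  notes read from external memory files (e.g. a people directory);
             always included in full so compression cannot drop them.
    history: conversation messages, oldest first.
    budget:  total token budget for the context.
    Returns (context_parts, number_of_history_messages_dropped).
    """
    used = sum(count_tokens(p) for p in pinned)
    if used > budget:
        raise ValueError("pinned memory alone exceeds the context budget")
    kept = []
    # Walk history newest-first, keeping a contiguous recent window that fits.
    for msg in reversed(history):
        cost = count_tokens(msg)
        if used + cost > budget:
            break
        kept.append(msg)
        used += cost
    kept.reverse()
    return pinned + kept, len(history) - len(kept)


pinned = ["People: Alex Chen (U01, design) and Alex Cheng (U02, backend) are different people."]
history = [
    "Alex Cheng asked for the API spec.",
    "Design review moved to Thursday.",
    "Please send the spec to Alex Cheng.",
]
context, dropped = build_context(pinned, history, budget=26)
print(dropped)  # 1: the oldest message no longer fits, but the directory note survives
```

This protects only what someone thought to pin; the agent still has no sense of what it lost from the dropped history.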

An AI agent as shared org infrastructure surfaces problems that don't exist for personal tools.

Access control: Our permissions live in plain text files. No RBAC (role-based access control), no audit trail. At 30 people with high trust, it works. It won't scale to 300.
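For contrast, here is a minimal sketch of the two missing pieces, role-based checks and an audit trail. It assumes the agent checks before surfacing channel content on a user's behalf. The users, roles, and channel names are hypothetical, and a real deployment would write the log to durable, append-only storage rather than a Python list.

```python
import json
import time

# Hypothetical role assignments and role -> channel grants.
USER_ROLES = {"mia": {"engineering"}, "sam": {"engineering", "leadership"}}
ROLE_CHANNELS = {
    "engineering": {"#eng", "#incidents"},
    "leadership": {"#exec"},
}


def can_read(user, channel):
    """Role-based check: a user may read a channel if any of their roles grants it."""
    return any(channel in ROLE_CHANNELS.get(role, set())
               for role in USER_ROLES.get(user, set()))


def check_and_log(user, channel, log):
    """Decide access and append a JSON audit record of the decision."""
    allowed = can_read(user, channel)
    log.append(json.dumps({"ts": time.time(), "user": user,
                           "channel": channel, "allowed": allowed}))
    return allowed


audit_log = []
print(check_and_log("mia", "#exec", audit_log))  # False
print(check_and_log("sam", "#exec", audit_log))  # True
print(len(audit_log))  # 2
```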

Resource contention: One power user consumed 60% of the daily token budget.
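One common mitigation, sketched here rather than taken from our setup, is to cap each user's share of the daily budget. The 20% cap and the 1,000,000-token daily total are hypothetical numbers chosen for illustration.

```python
from collections import defaultdict


class TokenBudget:
    """Daily token budget with a per-user cap (a hypothetical fair-share policy)."""

    def __init__(self, daily_total, max_share):
        self.daily_total = daily_total
        self.per_user_cap = int(daily_total * max_share)
        self.used_total = 0
        self.used_by = defaultdict(int)

    def try_spend(self, user, tokens):
        """Reserve tokens for a request; return False if either limit would be exceeded."""
        if self.used_total + tokens > self.daily_total:
            return False
        if self.used_by[user] + tokens > self.per_user_cap:
            return False
        self.used_total += tokens
        self.used_by[user] += tokens
        return True

    def reset(self):
        """Call once per day to start a fresh budget."""
        self.used_total = 0
        self.used_by.clear()


budget = TokenBudget(daily_total=1_000_000, max_share=0.2)
print(budget.try_spend("power_user", 150_000))  # True
print(budget.try_spend("power_user", 100_000))  # False: would exceed the 200,000 cap
print(budget.try_spend("teammate", 100_000))    # True
```

A hard cap is the simplest policy; it trades some idle capacity on quiet days for a guarantee that no single user can starve the rest of the team.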

Context pollution: When the agent handles multiple conversations in one session, attention bleeds across them. Databases solve the analogous problem with transaction isolation; LLMs have no equivalent. We've seen conversation A's details leak into conversation B's response with zero indication.
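Since the model itself offers no isolation, one option is to enforce it at prompt-assembly time: give each conversation its own history and never build a prompt from more than one. A minimal sketch with hypothetical conversation IDs and messages; note that strict isolation also gives up the cross-context correlation described earlier, so it is a trade-off rather than a fix.

```python
from collections import defaultdict


class IsolatedSessions:
    """Keep one message history per conversation so prompts never mix threads."""

    def __init__(self):
        self.histories = defaultdict(list)

    def add(self, conversation_id, message):
        """Record a message under its own conversation only."""
        self.histories[conversation_id].append(message)

    def prompt_for(self, conversation_id):
        """Build the prompt from this conversation's history only."""
        return "\n".join(self.histories[conversation_id])


sessions = IsolatedSessions()
sessions.add("A", "Budget for Q3 is confidential.")
sessions.add("B", "Can you summarize the design review?")
print(sessions.prompt_for("B"))  # Can you summarize the design review?
```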

The fundamental issue: an AI agent in an organization is a stateful shared resource that thinks. We don't have frameworks for that.

A fully connected AI agent changes organizational topology, not incrementally but structurally. Lossy multi-hop relay becomes zero-hop access. The agent doesn't replace anyone's judgment. It replaces the information relay infrastructure that was losing 30–50% of context along the way.

The distinction that matters: super-individual AI (one smarter person) versus collaboration-enhancement AI (lossless connections between people). For complex products built by teams, the second paradigm wins. One brilliant node can't substitute for a well-connected graph.

The open questions are serious: full connectivity as a single point of failure, memory that corrupts silently, access control that can't survive growth, and the big one: should this stay one agent or become many, and if many, how do they share context without recreating the same lossy relay we started with?

We have one month of data and a strong conviction that the direction is right. The rest is engineering.
