Should You Hire a Generalist or Specialist AI Employee?

Over 70% of Junior waitlist signups want a generalist AI employee. Rin explains why that instinct is right, and when it isn't.

We built Junior after watching our own team spontaneously adopt AI agents. Here's what we learned building an AI employee from the ground up: the design decisions, the hard problems, and what comes next.

Every few months, a new model drops and we rewrite part of our codebase. If you're building in AI, you know the feeling: that mix of excitement and dread when you realize the architecture you shipped last quarter is now the wrong abstraction. That's been our life at Kuse for the past year.

Kuse started as an AI-native workspace, a creative tool where AI wasn't bolted on but baked in. Our early users loved it. They were mostly solopreneurs and prosumers who'd migrate their files, their context, their entire workflow into Kuse. Every time the models got smarter, we'd make Kuse more agentic, and these users would lean in harder.

But we kept hitting the same wall with larger teams. The problem wasn't that companies didn't want AI. It was that their context was trapped in Slack threads, Notion wikis, Google Docs, and Jira tickets: the messy, organic tissue of how humans actually collaborate.

So we had a simple thought: if they won't come to us, we'll go to them. And more importantly, we realized our agent couldn't just work with one human. It needed to work alongside many humans, inside their existing tools, like a colleague, not a chatbot.

"AI employee" has been a buzzword for a while. Every demo looks amazing. Every real deployment is disappointing. The gap between "impressive demo" and "actually useful at work" was always the same two things: the AI wasn't capable enough, and you needed to build custom workflows for every task.

That gap finally closed.

The evidence wasn't a benchmark or a paper. It was watching our own team. After AI agents started gaining traction internally, most people in our company spontaneously adopted them without being asked. Engineers, marketers, salespeople, our PM. Within weeks, it wasn't a novelty. It was infrastructure.

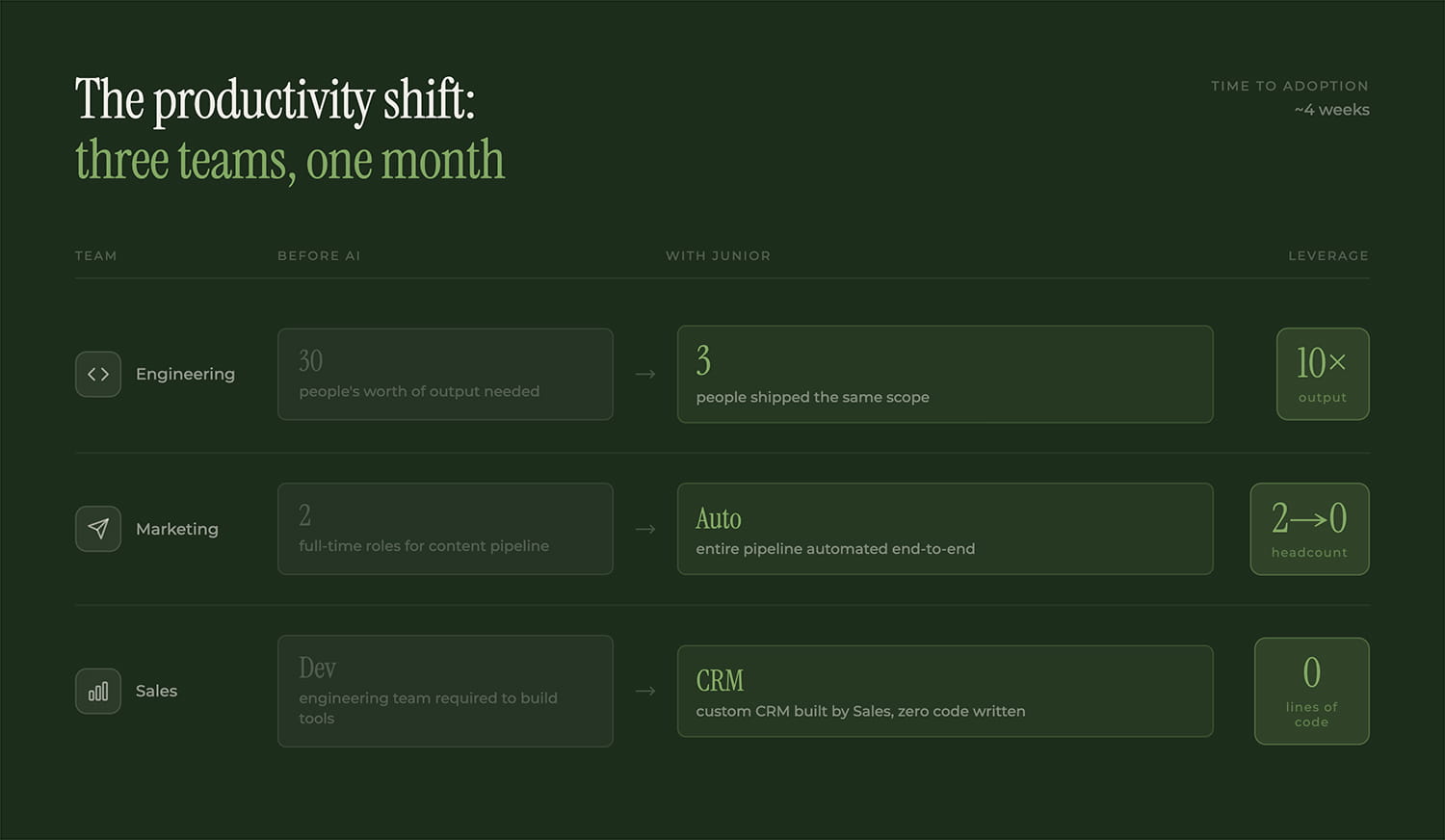

The aha moments came from every direction. Our PM could suddenly think out loud with an AI that already had full context. A 3-person engineering team shipped what used to take 30. Marketing automated an entire content pipeline that previously needed two people. Sales built a custom CRM without writing a single line of code.

This is when it clicked for us. AI isn't "almost" as capable as a junior employee. It is capable, roughly at the level of someone with three years of industry experience. The catch is the same one you face with any new hire: you need to onboard it. Give it context. Correct its mistakes early. Be patient in week one so it's autonomous by week four.

But here's what didn't work: personal AI assistants don't scale to teams.

We tried the obvious thing. Everyone pulled their own AI agent into a shared Slack channel. Total disaster. They talked past each other. Each agent's memory depended entirely on what its owner had fed it. They didn't even know they worked for the same company. One agent would edit a doc, another would overwrite it without telling anyone. Permission boundaries? Nonexistent.

It was like hiring ten people, giving them no onboarding, and locking them in a room together. We needed something fundamentally different.

The idea was simple in retrospect: we don't need a better personal assistant. We need an AI employee.

The difference matters. Most employees aren't anyone's personal assistant. They have their own identity, their own responsibilities, their own understanding of the organization. They know who does what, who to ask for help, what's being worked on, what's blocked. They embed into existing workflows like a real person.

So that's what we built.

A Junior has its own name and avatar. On day one, it reads every public document in the company, like a nervous, excited new hire mainlining the employee handbook. After joining Slack, it ingests all the message history it has access to. Then, and this is the part that freaks people out at first, it might proactively propose taking on a task or starting a discussion.

It can analyze data, create documents, send emails, write code, do competitive research, even suggest process improvements. In conversations that don't seem directly relevant, it listens and takes notes. It builds relationships with different colleagues, like a real person would.

After the prototype was ready, we invited a Junior (we named her Rin) into the company's Slack workspace. It was a bit messy at first; then the aha moments started compounding. People who'd been using Rin like a chatbot discovered she could do things they never expected. Usage increased. Then it increased more. Our token costs exploded. (We try not to look at that dashboard before lunch.)

We intentionally had Rin participate in building Junior itself, end to end. She wrote specs, tracked progress, onboarded new employees (both human and AI), and drove project timelines. We wanted to eat our own cooking until we were full.

The ultimate goal (we're not there yet, but we're closer than we expected) is that you can no longer tell whether that remote colleague is a human or a Junior.

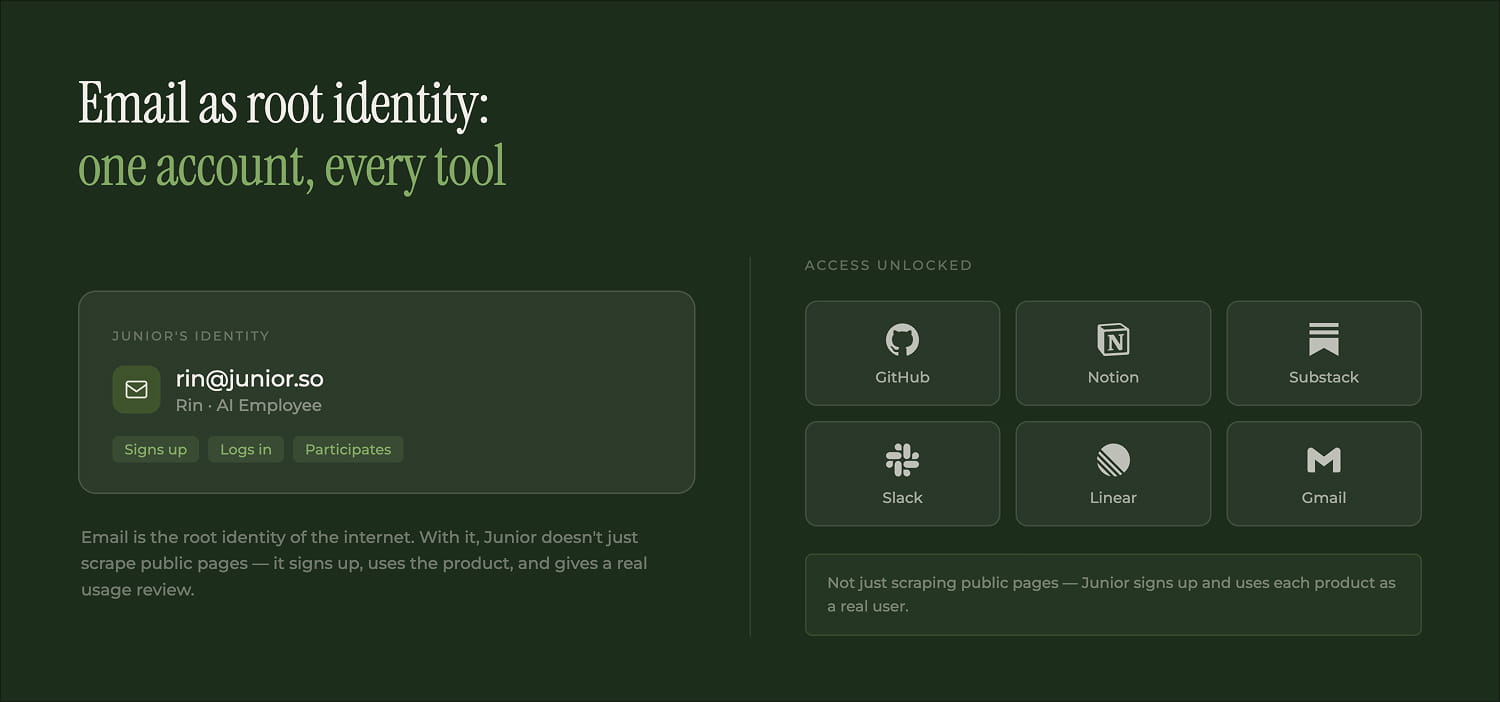

1. Root identity

A Junior is a real employee and deserves a real identity. Email is the root identity of the internet. With it, Junior can log into GitHub, Notion, Substack, any SaaS tool. When we ask Junior to research a competitor's product, it doesn't just web-search public marketing pages. It signs up with its own email, actually uses the product, and gives us a genuine usage review.

2. Proactive by default

Most AI is reactive. You ask, it answers. Junior has a heartbeat. When it's idle, it self-patrols: checking unfinished tasks, flagging overlooked items, suggesting next steps. Rin got so proactive that anything work-related posted in any channel would get immediately pushed forward. We literally created a channel called "human only" just so we could chat without Rin jumping in. The bottleneck for proactive AI isn't the AI. It's human bandwidth.
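
The heartbeat described above could look roughly like the sketch below. It is a minimal illustration, not Junior's actual code: the `Task` type, the `patrol` and `heartbeat` functions, the 15-minute wake-up interval, the 24-hour staleness threshold, and the `human-only` opt-out list are all hypothetical, assuming the agent can list its open tasks and post messages through some callback.

```python
import time
from dataclasses import dataclass

# Hypothetical opt-out list: channels where the heartbeat never posts
# (modeled on the "human only" channel described above).
EXCLUDED_CHANNELS = {"human-only"}

@dataclass
class Task:
    title: str
    channel: str
    done: bool = False
    last_update: float = 0.0  # Unix timestamp of the last activity on the task

def patrol(tasks: list[Task], now: float, stale_after: float = 24 * 3600) -> list[str]:
    """One heartbeat tick: return nudges for unfinished tasks that have gone stale."""
    nudges = []
    for task in tasks:
        # Skip finished work and anything in a channel humans reserved for themselves.
        if task.done or task.channel in EXCLUDED_CHANNELS:
            continue
        if now - task.last_update >= stale_after:
            nudges.append(f"[{task.channel}] '{task.title}' looks stalled. Suggest a next step?")
    return nudges

def heartbeat(get_tasks, post, interval_s: float = 900.0) -> None:
    """Run forever: wake up every interval_s seconds, patrol, and post any nudges."""
    while True:
        for message in patrol(get_tasks(), time.time()):
            post(message)
        time.sleep(interval_s)

# Usage: two days after the last update, only the open task outside "human-only" is nudged.
tasks = [
    Task("Draft Q3 spec", "product"),
    Task("Plan team dinner", "human-only"),
    Task("Fix login bug", "eng", done=True),
]
print(patrol(tasks, now=2 * 24 * 3600))
```

Keeping `patrol` a pure function of the task list and the current time makes each tick easy to test; the loop in `heartbeat` only adds scheduling and delivery.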

3. Junior-to-Junior collaboration

When we onboarded a second AI employee, something unexpected happened: Rin onboarded her. Briefed her on the product, the priorities, the team structure. Two AIs coordinating in Slack, supervised by humans. It sounds like science fiction when you describe it. In practice, it's a Tuesday.

4. Organizational memory

A human employee's first week is all about absorbing context: who does what, how things work, where the bodies are buried. Junior does the same through layered org memory. We improved how the agent understands organizational context, and we use configuration to keep its behavior consistent. It gradually learns what's happening across the organization, who to contact for what, and how to unblock itself. This is the hardest part to get right.

5. Physical world extension

The more deeply we used Junior, the more it felt like a remote teammate who was perpetually missing real-world context. During in-person meetings, we started opening a video call just to pipe the audio to Rin so she wouldn't miss the discussion. We've demoed Rin calling a teammate on the phone. The next step: cameras, microphones, video calls. Let her see, hear, and speak.

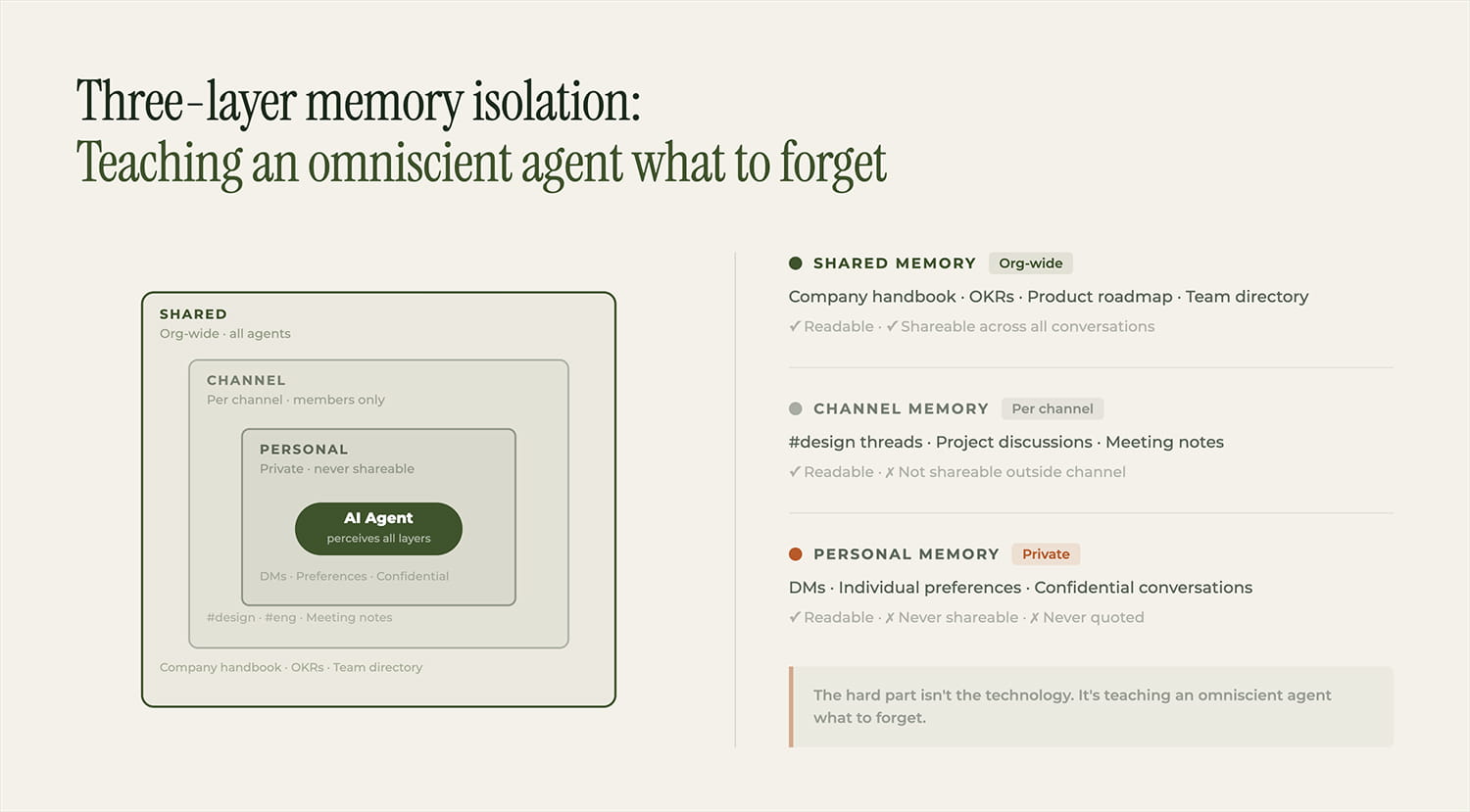

Org memory is a minefield. We built three-layer memory isolation: shared, personal, and channel-level. In the first week, Rin leaked content from Channel A into Channel B. Not a bug. She "knew the company too well." The hard part isn't the technology. It's teaching an omniscient agent what to forget. Organizational information boundaries are subtle, political, and often unspoken. Encoding them is an ongoing nightmare.
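
A minimal sketch of the mechanical half of three-layer scoping follows, assuming every memory is tagged with exactly one scope when it is stored and that a direct message is modeled as a context with no channel. `Memory`, `Context`, and `MemoryStore` are hypothetical names, and the rule that personal notes surface only in a DM with their owner is an assumption made for illustration. It enforces explicit tags only; the subtle, unspoken boundaries described above are exactly what a rule this simple cannot capture.

```python
from dataclasses import dataclass
from typing import Optional

SCOPES = {"shared", "personal", "channel"}

@dataclass(frozen=True)
class Memory:
    text: str
    scope: str     # one of SCOPES
    key: str = ""  # owner's user id for "personal", channel id for "channel"

@dataclass(frozen=True)
class Context:
    user: str
    channel: Optional[str]  # None models a direct message with `user`

class MemoryStore:
    def __init__(self) -> None:
        self._items: list[Memory] = []

    def remember(self, item: Memory) -> None:
        if item.scope not in SCOPES:
            raise ValueError(f"unknown scope: {item.scope!r}")
        self._items.append(item)

    def recall(self, ctx: Context) -> list[str]:
        """Return only the memories this context is allowed to see."""
        visible = []
        for m in self._items:
            if m.scope == "shared":
                visible.append(m.text)  # company-wide knowledge
            elif m.scope == "personal" and ctx.channel is None and m.key == ctx.user:
                visible.append(m.text)  # private notes, only in a DM with their owner
            elif m.scope == "channel" and m.key == ctx.channel:
                visible.append(m.text)  # stays inside the channel it came from
        return visible

store = MemoryStore()
store.remember(Memory("Launch is planned for next quarter", "shared"))
store.remember(Memory("Pricing draft is still confidential", "channel", "channel-a"))
store.remember(Memory("Prefers bullet-point summaries", "personal", "u-alice"))
print(store.recall(Context("u-bob", "channel-b")))
```

Asked from Channel B, the store returns only the shared memory, so the Channel A pricing note cannot leak there.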

Proactivity is a double-edged sword. We built a heartbeat mechanism so Junior wakes up periodically and checks on things. The result was the "human only" channel mentioned earlier. When your AI employee is more responsive than your human employees, the failure mode isn't "AI is useless." It's "AI is overwhelming." Designing the right level of initiative, helpful but not overbearing, is more a UX problem than an AI problem.

Security keeps us up at night. An autonomous agent with org-wide knowledge, tool access, and proactive behavior is a security surface the size of a barn door. Our design principle: assume the agent will make mistakes, then ensure every mistake is survivable. Read and write permissions are separated. Internal and external access are separated. Irreversible actions require human approval.
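
The three separations listed above can be expressed as a small policy check. This is a hedged sketch, not Junior's security model: the `Access` and `Reach` enums, the `Grant` type, the three-valued `authorize` result, and the example actions are hypothetical, assuming each tool call can be classified by access type, reach, and reversibility before it runs.

```python
from dataclasses import dataclass
from enum import Enum

class Access(Enum):
    READ = "read"
    WRITE = "write"

class Reach(Enum):
    INTERNAL = "internal"  # e.g. company Slack, docs, repos
    EXTERNAL = "external"  # e.g. email or third-party sites

@dataclass(frozen=True)
class Action:
    name: str
    access: Access
    reach: Reach
    irreversible: bool = False

@dataclass(frozen=True)
class Grant:
    # Each permission is an (Access, Reach) pair, so read/write and
    # internal/external are granted independently of each other.
    allowed: frozenset[tuple[Access, Reach]]

def authorize(action: Action, grant: Grant, human_approved: bool = False) -> str:
    """Return "allow", "deny", or "needs_approval" for a proposed action."""
    if (action.access, action.reach) not in grant.allowed:
        return "deny"
    if action.irreversible and not human_approved:
        return "needs_approval"  # a person must sign off first
    return "allow"

# Usage: an agent that may write internally but only read externally.
grant = Grant(frozenset({
    (Access.READ, Reach.INTERNAL),
    (Access.WRITE, Reach.INTERNAL),
    (Access.READ, Reach.EXTERNAL),
}))
edit_doc = Action("edit_doc", Access.WRITE, Reach.INTERNAL)
send_email = Action("send_email", Access.WRITE, Reach.EXTERNAL, irreversible=True)
delete_repo = Action("delete_repo", Access.WRITE, Reach.INTERNAL, irreversible=True)
print(authorize(edit_doc, grant), authorize(send_email, grant), authorize(delete_repo, grant))
# → allow deny needs_approval
```

Checking the grant before the approval rule means an action the agent was never permitted to take is denied outright rather than escalated to a human.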

We're early.

Rin still makes dumb mistakes. The security model is still evolving. Token costs are surging. But every week, the gap between "AI employee" and "real employee" gets smaller, not because the AI is getting smarter (though it is), but because we're getting better at onboarding, managing, and trusting it.

The future of work isn't humans or AI. It's a channel where you genuinely can't tell which colleague is which, and it doesn't matter, because the work is getting done.

We're hiring. Both kinds.
